Over the past year, interest in AI agents has grown rapidly. As leadership teams and IT departments try to bring these agents under strategic control, governance becomes a challenge. AI technology, and with it the approaches to agent-based systems, has evolved faster than the regulations and guidelines for managing it. However, frameworks already exist that structure some of the requirements and provide guidelines for organizations undergoing an agent-based transformation.

Before discussing specific rules, it is worth pointing out the most common AI regulations and standards in use today:

- EU AI Act: The first comprehensive legal act in the world regulating the artificial intelligence market within the European Union. It classifies AI systems by risk (from minimal to unacceptable) and imposes strict safety and human oversight requirements on high-risk solutions.

- NIST AI Risk Management Framework: A risk management framework developed by the U.S. National Institute of Standards and Technology. It focuses on four key functions (Govern, Map, Measure, Manage) that help organizations create reliable, safe, and resilient AI systems.

- ISO/IEC 42001: An international standard specifying requirements for an Artificial Intelligence Management System (AIMS). It is a certifiable standard that helps organizations systematically address ethical considerations, data management, and continuous improvement of AI processes within business structures.

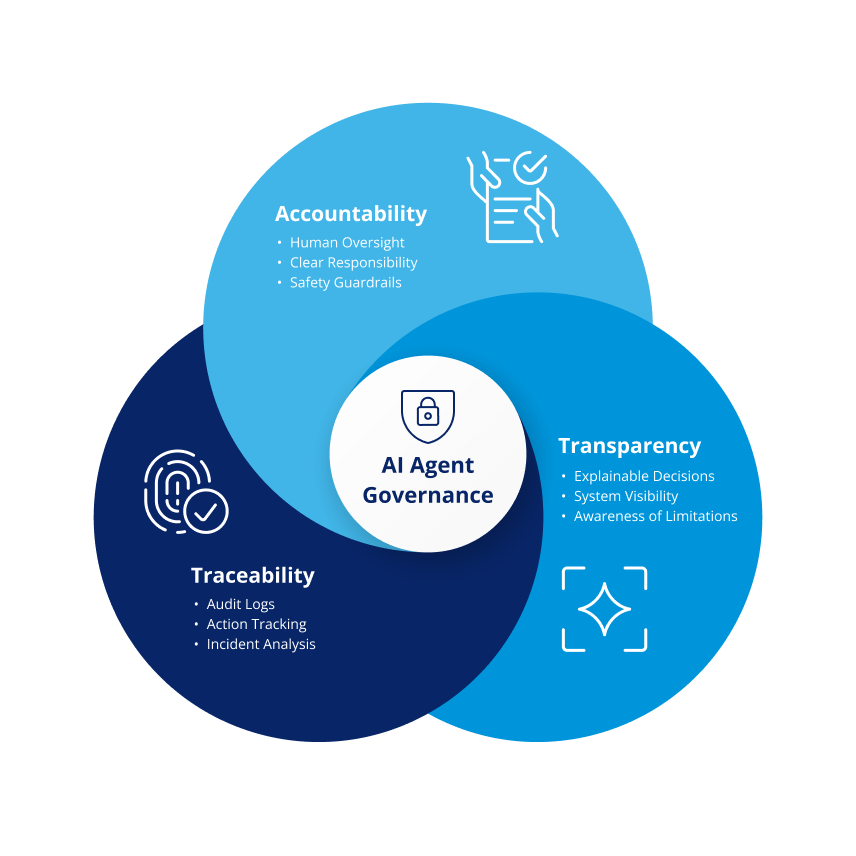

Other significant frameworks are also worth mentioning, including the OECD AI Principles and the IEEE 7000 series. A common thread runs through all of these frameworks: they treat the triad of Accountability, Transparency, and Traceability as paramount characteristics of AI systems that are safe, fair, and ethical. However, they differ in how they operationalize these concepts for intelligent agents.

As organizations use more AI agents, they increasingly rely on central platforms, either built internally or bought from vendors, to manage and coordinate them. These platforms must give operations and security teams the tools to monitor agent behavior, enforce accountability (including audit trails), and apply common security policies.

Accountability: Who Takes the Responsibility?

In agentic systems that independently plan actions and utilize external tools, the traditional concept of accountability becomes blurred. However, regulations clearly state that technical autonomy is not the same as legal autonomy.

- Human-in-the-Loop: A key element of accountability is providing oversight mechanisms that allow a human to intervene or halt the agent’s actions at any time. The interaction between intelligent agents and business users is designed so that a designated owner can approve or override decisions. In this way, the responsibility for the consequences of the agent’s actions is always attributed to a specific human decision-maker who oversees the entire process. This, in turn, makes it easier for organizations to meet regulatory requirements and to resolve disputes when something goes wrong.

- Guardrails: These are systemic limitations on the agent’s freedom of action, defining its “safe operating space.” Instead of relying solely on flexible linguistic instructions, guardrails introduce hard technical and ethical limits (e.g., blocking access to sensitive databases, financial transaction limits, or security filters). Guardrails can monitor and filter both content sent by users to AI systems (e.g., to prevent misuse or protect against malicious content) and content generated by AI models (e.g., to perform basic fact-checking or verify responsibility structures, which helps to reduce hallucinations in the system). This reduces the risk of data leaks, policy violations, and unsafe outputs in production systems. The implementation of guardrails typically relies on either ready-made libraries or other AI models that have been specifically trained for this type of application.

- Accountable Entity: The practical implementation of ethics requires a clear definition of who is responsible for an agent’s errors: the model provider, the IT team deploying the agent, or the business unit using it within a specific business process. In practice, this means applying shared responsibility models and precisely defining in contracts (including SLAs) where responsibility for the technology ends and where responsibility for its misuse, or for a lack of oversight of the business process, begins. Without this clarity, organizations may face legal uncertainty when incidents occur or audits are triggered.
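
The guardrail and human-in-the-loop ideas above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the tool names, the blocked resources, the two monetary limits, and the `approved_by` parameter are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


@dataclass(frozen=True)
class ToolCall:
    tool: str            # hypothetical tool name, e.g. "payments.transfer"
    resource: str        # target table, account, file, ...
    amount: float = 0.0  # only meaningful for financial tools


# Assumed policy values; a real deployment would load these from configuration.
BLOCKED_RESOURCES = {"hr.salaries", "customers.pii"}
AUTO_APPROVE_LIMIT = 1_000.0  # transfers above this need a human owner
HARD_LIMIT = 50_000.0         # transfers above this are never allowed


def check_guardrails(call: ToolCall) -> Verdict:
    """Apply hard limits before the agent's tool call is executed."""
    if call.resource in BLOCKED_RESOURCES:
        return Verdict.BLOCK
    if call.tool == "payments.transfer":
        if call.amount > HARD_LIMIT:
            return Verdict.BLOCK
        if call.amount > AUTO_APPROVE_LIMIT:
            return Verdict.NEEDS_APPROVAL
    return Verdict.ALLOW


def execute(call: ToolCall, approved_by: Optional[str] = None) -> str:
    """Run a tool call only if guardrails (and, if needed, a human) allow it."""
    verdict = check_guardrails(call)
    if verdict is Verdict.BLOCK:
        return "blocked"
    if verdict is Verdict.NEEDS_APPROVAL and approved_by is None:
        return "pending_approval"
    # The real tool invocation would go here; this sketch only reports the outcome.
    owner = approved_by or "auto"
    return f"executed (accountable: {owner})"
```

Because the checks live in code outside the prompt, the agent cannot talk its way past them, and every executed high-value action carries the name of the person who approved it.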

Transparency: How to Understand the Internals of an AI Agent?

Transparency in AI agents goes beyond simply providing technical documentation. Managing transparency in AI systems is primarily a matter of risk analysis. For most modern AI models, it is no longer possible to fully explain decisions in the traditional way, unlike earlier machine learning models, where the contribution of individual factors could be traced more clearly.

However, for language models, which form the core of intelligent agents, it is possible to implement certain heuristic mechanisms. These mechanisms help us understand, with reasonable confidence, why an agent acted in a certain way. These heuristics primarily include:

- Explainability: The agent must be capable of presenting a “chain of thought” that led it to a specific decision. In practical terms, this means that agent interfaces should present not only the result, but also the logical steps taken to achieve it. Since each token in an LLM depends on the previous ones, forcing the agent to reason step by step before acting makes its decisions more consistent with its explanation.

- Agent Status Monitoring: Customers or employees interacting with an AI agent must be aware that they are not speaking to a human. Most modern regulations treat this as a minimum ethical requirement, and having it in place protects organizations from accusations of deception and helps preserve user trust. This guideline is particularly important in purely autonomous systems and in voice interfaces (e.g., VoiceBots on support lines), where the user may not be aware of the entity they are interacting with.

- Limits of Competence: Product teams and system owners must clearly communicate the limitations of the model to end users. At C&F, our goal is to build AI solutions that can be trusted, and our solutions use many advanced techniques to limit hallucinations. Still, because AI systems are based on probabilities, occasional errors are unavoidable. Users must therefore be informed of this possibility and must know when the agent is operating outside of its safe application boundaries.
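
As a rough illustration of the three heuristics above, the sketch below wraps an agent answer in a response object that carries its reasoning steps, an AI disclosure for the first turn, and an out-of-scope warning. The names `AgentResponse`, `render`, `AI_DISCLOSURE`, and the `SUPPORTED_TOPICS` set are assumptions made for this example; how the topic is classified is left out entirely.

```python
from dataclasses import dataclass, field
from typing import List

AI_DISCLOSURE = "You are talking to an AI assistant, not a human."
SUPPORTED_TOPICS = {"billing", "shipping", "returns"}  # hypothetical validated scope
SCOPE_WARNING = (
    "Note: this question is outside the assistant's validated scope; "
    "please verify the answer."
)


@dataclass
class AgentResponse:
    answer: str
    reasoning_steps: List[str] = field(default_factory=list)
    topic: str = "unknown"  # assumed to be set by an upstream classifier

    @property
    def in_scope(self) -> bool:
        return self.topic in SUPPORTED_TOPICS


def render(response: AgentResponse, first_turn: bool) -> str:
    """Build the text shown to the user, including disclosure and reasoning."""
    lines = []
    if first_turn:
        lines.append(AI_DISCLOSURE)  # agent status disclosure
    if not response.in_scope:
        lines.append(SCOPE_WARNING)  # limits of competence
    lines.append(response.answer)
    if response.reasoning_steps:     # explainability
        lines.append("How this answer was reached:")
        lines.extend(
            f"  {i}. {step}" for i, step in enumerate(response.reasoning_steps, 1)
        )
    return "\n".join(lines)
```

Keeping disclosure and scope warnings in the rendering layer, rather than asking the model to produce them, means they appear consistently regardless of what the model generates.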

Traceability and Auditability: How to Analyze the History?

The third pillar of the triad, traceability, together with the auditability it enables, ensures that accountability and transparency are available not only during the agent’s operation, but also retrospectively, when analyzing its execution history. For data and engineering teams, collecting data about agent actions is both a regulatory requirement and a way to improve agent behavior over time. Teams can use historical interaction data to improve agent performance and align it with company policies and decision rules.

Three elements are essential for implementing auditability for intelligent agents:

- Logging (Forensic Logging): Every action taken by the agent, from sending a request to an API to modifying a file, should be recorded in an immutable and tamper-proof manner. In practice, this means collecting the full operational context: from the raw input prompt, through model metadata (version, temperature, seed), to specific parameters of external tool calls. This approach allows for a thorough “post-mortem” analysis in the event of damage, an error, or a security breach. It also shortens investigation time and supports formal incident reporting and audits. These elements are similar to how, for example, Audit Trails have been built in systems subject to regulations such as GxP.

- Reproducibility: While AI-based systems are not fully deterministic, auditability requires understanding the context in which a decision was made. In practice, this means striving for “virtual reproducibility”: recreating the conditions that led to a specific decision. This is critical for demonstrating compliance and for distinguishing system failures from misuse. For intelligent agents using LLMs, this primarily involves versioning knowledge (in the knowledge bases of RAG architectures) and system instructions. Additionally, for tools or agents based on traditional ML models, it should also include freezing model versions and logging seeds (if possible). This makes it possible to recreate the state of the system at the time of the decision or behavior being investigated, allowing you to distinguish between an AI model error, intentional manipulation, and an error in the input data.

- Agent Drift Analysis: In complex systems, auditability means being able to detect when an agent’s behavior starts to drift away from what it was designed to do. This means using automated checks to compare the agent’s responses with trusted reference data and to monitor whether its reasoning stays consistent over time. Detecting these changes early makes it easier to adjust guardrails or update knowledge sources before the agent behaves in unintended or unsafe ways.
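
The three elements above can be illustrated with a small Python sketch. It assumes an in-memory, hash-chained log (which makes tampering detectable rather than impossible; production systems would typically add write-once storage) and a deliberately crude drift metric based on exact-match comparison with reference answers. The class `AuditLog`, the function `drift_rate`, and all field names in the example payloads are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(payload: Dict[str, Any], prev_hash: str) -> str:
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps(payload, sort_keys=True) + prev_hash
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, payload: Dict[str, Any]) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = _digest(payload, prev_hash)
        self.entries.append({"payload": payload, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _digest(entry["payload"], prev_hash) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def drift_rate(answers: Dict[str, str], reference: Dict[str, str]) -> float:
    """Share of reference questions whose current answer no longer matches."""
    if not reference:
        return 0.0
    mismatches = sum(1 for q, expected in reference.items() if answers.get(q) != expected)
    return mismatches / len(reference)


log = AuditLog()
# Context needed for "virtual reproducibility": prompt, model metadata, knowledge version.
log.append({"prompt": "Refund order 17", "model_version": "m-1",
            "temperature": 0.0, "seed": 7, "kb_version": "kb-3"})
# Parameters of the external tool call made by the agent.
log.append({"tool": "orders.refund", "params": {"order_id": 17}})
chain_ok = log.verify()
```

In practice, exact-match comparison is usually too strict for free-text answers, so a semantic similarity measure or a rubric-based evaluation would replace the equality check in `drift_rate`; the structure of the check stays the same.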

Governance is Foundational for Trustworthy AI Agents

As AI agents are increasingly integrated into various aspects of an organization’s operations, there is a practical need to implement oversight mechanisms for these solutions. The goal of these mechanisms is to ensure long-term security and build user trust in their operation.

Regulations and best practices provide a practical foundation for deploying AI agents in a safe and controlled way. The triad of accountability, transparency, and traceability forms a closed ecosystem:

- We must know who is responsible for the system (Accountability).

- We must be able to see what the system is doing and why (Transparency).

- We must be able to trace the sequence of events after they have occurred (Traceability).

In our opinion, as more systems come to rely on autonomously operating AI agents, trust and governance will play a growing role in whether these systems succeed. In practice, this means that organizations that embed governance early will scale AI agents more safely and with fewer operational disruptions.
